Augmenting Local AI with Browser Data: Introducing MemoryCache

The internet was born as a way to connect people and data around the world. Today, machine learning is upending the way that we interact with data and information. Language and multimedia models seek ever-larger datasets to train on, including the entirety of the internet. There are complex sociological and regulatory questions at play, with critical decisions to be made about copyright, safety, transparency, access, and representation.

MemoryCache, a Mozilla Innovation Project, is an early exploration that augments an on-device, personal model with local files saved from the browser. The goal is a more personalized and tailored experience, approached through the lens of privacy and agency.

The way that humans think is uniquely personal to each individual. While individuals share many principles, values and properties of their surrounding communities and organizations, each of us has a unique perspective and set of information that we're exposed to on a regular basis. The process of making new insights from the content we create and consume is not a "one-size-fits-all" opportunity, and machine learning capabilities open up a vast range of new computing advancements and paradigms.

Today, MemoryCache is a set of scripts and simple tools to augment a local copy of privateGPT. The project contains:

- A Firefox extension that acts as a simple "printer" to save pages to a subdirectory in your `/Downloads/` folder, and includes the ability to quickly save notes and information from your browser to your local machine
- A shell script that listens for changes in the `/Downloads/MemoryCache` directory and runs the privateGPT `ingest.py` script
- Code to (optionally) update the Firefox `SaveAsPDF` API on a local build of Firefox to enable a flag that silently saves webpages as PDF for easier human readability (by default, pages need to be saved as HTML in Firefox)
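
To make the "listen for changes, then ingest" step concrete, here is a minimal Python sketch of the idea. It is not the project's shell script: it swaps in simple polling with the standard library, and the function names (`snapshot`, `has_new_or_modified`, `watch`), the polling interval, and the command passed in are all assumptions made for illustration.

```python
import subprocess
import time
from pathlib import Path
from typing import Dict, List, Optional


def snapshot(directory: Path) -> Dict[str, float]:
    """Map every file under `directory` to its last-modified time."""
    return {
        str(path): path.stat().st_mtime
        for path in directory.rglob("*")
        if path.is_file()
    }


def has_new_or_modified(before: Dict[str, float], after: Dict[str, float]) -> bool:
    """Return True if any file in `after` is new or has a different mtime."""
    return any(before.get(path) != mtime for path, mtime in after.items())


def watch(
    directory: Path,
    command: List[str],
    interval: float = 5.0,
    max_polls: Optional[int] = None,
) -> int:
    """Poll `directory` and run `command` whenever files are added or changed.

    `command` is whatever re-ingests the documents (hypothetical here, e.g.
    a call to privateGPT's ingest script). `max_polls` bounds the loop so it
    can be exercised in tests; None means poll forever.
    Returns the number of times `command` was run.
    """
    runs = 0
    polls = 0
    before = snapshot(directory)
    while max_polls is None or polls < max_polls:
        time.sleep(interval)
        polls += 1
        after = snapshot(directory)
        if has_new_or_modified(before, after):
            # check=False: a failed ingest should not stop the watcher.
            subprocess.run(command, check=False)
            runs += 1
        before = after
    return runs
```

A call might look like `watch(Path.home() / "Downloads" / "MemoryCache", ["python", "ingest.py"])`, run from the privateGPT checkout. Both the path and the command are assumptions about a local setup. Polling is the simplest portable choice; an event-based file watcher would react faster, at the cost of a platform-specific dependency.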

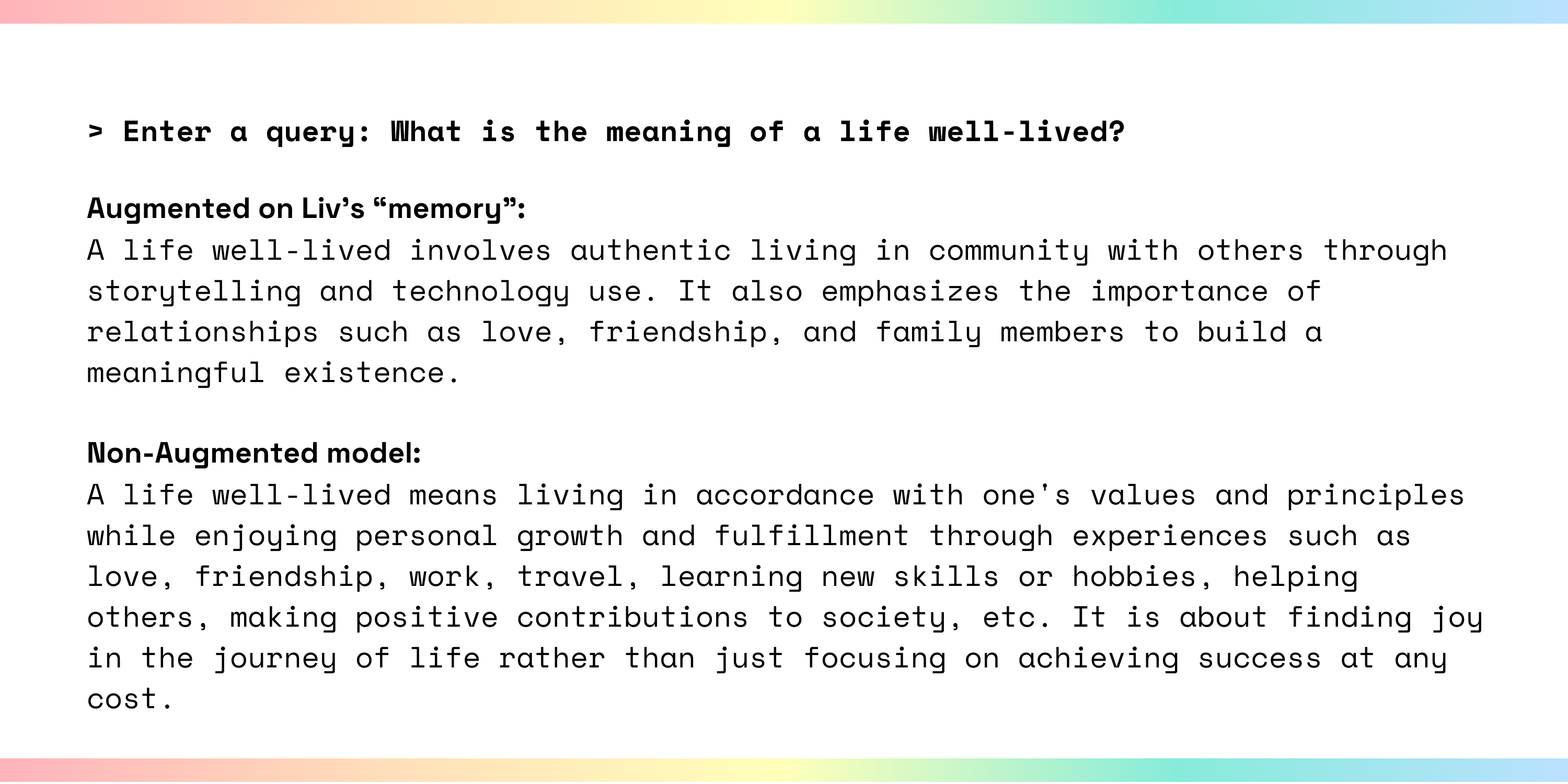

We see MemoryCache as a sandbox for experimenting with some of the quirkier, more unique parts of the brainstorming and idea generation process, all done entirely locally. Our test ground for MemoryCache is a gaming PC with an Intel i7-8700 processor, running Nomic AI's groovy.ggml version of the gpt-4-all model. We're using the primordial version of privateGPT because our preliminary evaluations found that newer models and versions of the application start to over-generalize their responses, even after the model is augmented with personal data. In our environment, that personal data is 75.3 MB of documents saved from the browser and from personal blog posts, notes, and journal entries.

MemoryCache is early, experimental, and a sandbox for exploration. You can follow along with the project on GitHub, or check out our website to stay up to date with our progress!