|

10:30am - 11:00am

|

Registration and check-in

|

|

11:00am - 11:50am

|

Workshop #1 (3 Choices):

|

|

|

Robust and Reliable ML Application Development - This workshop aims to provide an in-depth understanding of CAPSA, a unified framework for quantifying risk in deep neural networks developed by Themis AI, in addition to risk-aware applications built with this framework.

|

|

|

What Is Humane Innovation? - Join Center for Humane Technology Innovation Lead Andrew Dunn for an interactive presentation on building AI with principles from their Foundations course.

|

|

|

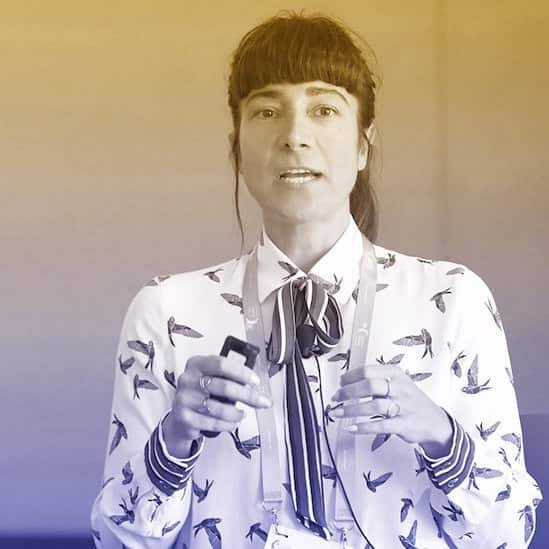

Prototyping Social Norms and Agreements in Responsible AI - In this workshop led by Mozilla Senior Fellow in Trustworthy AI Bogdana Rakova, we’ll explore questions related to AI risks, safeguards, transparency, human autonomy, and digital sovereignty through social, computational, and legal mechanisms by applying a hands-on approach grounded in participants’ projects.

|

|

12:00pm - 12:50pm

|

Lunch

|

|

1:00pm - 1:50pm

|

Workshop #2 (4 Choices):

|

|

|

Algorithmic Fairness: A Pathway to Developing Responsible AI Systems - Join Golnoosh Farnadi, Canada CIFAR AI Chair, Mila, as she discusses the importance of fairness and provides an overview of techniques for ensuring algorithmic fairness through the machine learning pipeline while also suggesting questions and future directions for building a responsible AI system.

|

|

|

Operationalizing AI Ethics - Dr. Karina Alexanyan, Director of Strategy at All Tech is Human; Katya Klinova, Head of AI, Labor and the Economy at Partnership on AI; Chris McClean, Director, Global Lead, Digital Ethics at Avanade; Gabriella de Queiroz, Principal Cloud Advocate at Microsoft; and Ehrik Aldana, Tech Policy Product Manager at Credo.ai, will share insights and examples of how to be thoughtful and responsible about designing and deploying innovations in AI.

|

|

|

Building Trust into Gen AI: Model Visibility and Tracking Change in Data Distributions - The current generation of Generative AI excels at working directly with humans in human language, and for several reasons this increases the potential for harm. Join Fiddler AI to generate a dataset by collaborating on a toy genAI task and explore techniques for localizing model weaknesses and time-dependent semantic drift.

|

|

|

“Wait Wait, Don't push that button!” Human-Centered Design for Responsible AI - Join Superbloom for a workshop on human-centered design and AI, where we'll talk about designing for users in responsible ways that take into account challenges, benefits, and what can go wrong.

|

|

2:00pm - 3:20pm

|

Keynote Speakers

|

|

|

Imo Udom - SVP Innovation Ecosystems

|

|

|

Margaret Mitchell - Chief Ethics Scientist, Hugging Face

|

|

|

Gary Marcus - Emeritus Professor of Psychology and Neural Science at NYU

|

|

|

Kevin Roose - Speaker, reporter and author of Futureproof

|

|

3:35pm - 5:40pm

|

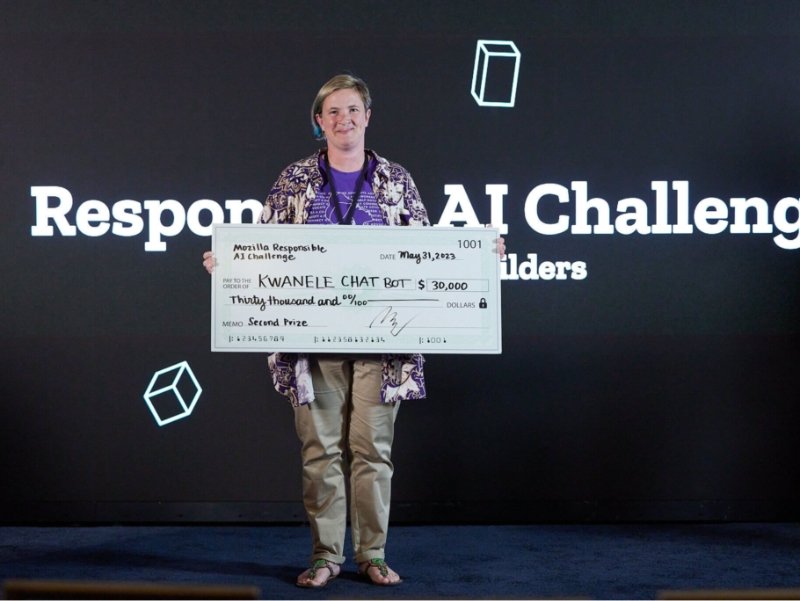

Responsible AI Challenge Final Rounds

|

|

|

3-5 minute pitch + 5 minutes Q&A per team

|

|

|

Awards and prizes!

|

|

5:40pm - 7:30pm

|

Closing Reception

|