llamafile: bringing LLMs to the people, and to your own computer

Introducing the latest Mozilla Innovation Project, llamafile, an open source initiative that collapses all the complexity of a full-stack LLM chatbot down to a single file that runs on six operating systems. Read on as we share a bit about why we created llamafile, how we did it, and the impact we hope it will have on open source AI.

Today, most people who are using large language models (LLMs) are doing so through commercial, cloud-based apps and services like ChatGPT. Many startups and developers are doing the same, building applications or even entire companies on top of APIs provided by companies like OpenAI. This raises many important questions about privacy, access, and control. Who is “listening” to our chat conversations? How will our data be used? Who decides what kinds of questions a model will or won’t answer?

If we collectively do nothing, the default outcome is that this new dawning era of AI will be dominated by a handful of powerful tech companies. If this happens, the above questions will be difficult or impossible to answer. We will simply have to “trust” for-profit corporations to do the right thing. The history of computing and the Web suggests that we should have a plan B.

At Mozilla, we believe that open source is one of the most powerful answers to this problem. Just as open source has been key to Mozilla’s ongoing efforts to fight for a free and open Web, it can also play a critical role in ensuring that AI remains free and open. It can do this by opening the “black box” of AI and letting the people peer inside. The beating heart of open source is transparency. If you can fully inspect a technology, then you can understand it, change it, and control it. In this way, the transparency afforded by open source AI can increase both trust and safety. It can increase competition by lowering barriers to entry and increasing user choice. And it can put the technology directly into the hands of the people.

The good news is that open source AI has made enormous strides over the past year. Starting with Meta’s release of their LLaMA model, there’s been a Cambrian Explosion of “open” models. In a pattern reminiscent of Moore’s Law, it often feels like each new model is better and faster while also being smaller than the last. And while there is much debate on whether or not these models are truly “open source,” they are generally more open and transparent than the commercial options. There has also been a renaissance of innovation in open source AI software: inference run-times, UIs, orchestration tools, agents, and training tools.

Unfortunately, things are harder than they should be in the open source AI world. It’s not easy to get up and running with an open source LLM stack. Depending on the toolset you choose, you may need to clone GitHub repositories, install rafts of Python dependencies, use a specific version of Nvidia’s SDK, compile C++ code, and so on. Meanwhile, things are evolving so fast in this space that instructions and tutorials that worked yesterday may be obsolete tomorrow. Even the file formats for open LLMs have been changing rapidly. In short, using open source AI requires a lot of specialized knowledge and dedication.

But what if it didn’t? What if using open source AI were as simple as double-clicking an app? How many more developers could then work with this technology and participate in its growth as a viable alternative to commercial products? And how many everyday end users could adopt open source solutions instead of closed source ones?

Mozilla wants to answer these questions, so we started thinking and looking around. Quickly, we found two amazing open source projects that could, together, fit the bill:

llama.cpp is an open source project that was started by Georgi Gerganov. It accomplishes a rather neat trick: it makes it easy to run LLMs on consumer-grade hardware, relying on the CPU instead of requiring a high-end GPU (although it’s happy to use your GPU, if you have one). The results can be astonishing: LLMs running on everything from MacBooks to Raspberry Pis, churning out responses at surprisingly usable speeds.

Cosmopolitan is an open source project created by Justine Tunney. It accomplishes another neat trick: it makes it possible to distribute and run programs on a wide variety of operating systems and hardware architectures. This means you can compile a program once, and then the resulting executable can be used on nearly any kind of modern computer and it will just… work!

We realized that by combining these two projects, we could collapse all the complexity of a full-stack LLM chatbot down to a single file that would run anywhere. With her deep knowledge of both Cosmopolitan and llama.cpp, Justine was uniquely suited to the challenge. Plus, Mozilla was already working with Justine through our Mozilla Internet Ecosystem (MIECO) program, which actually sponsored her work on the most recent version of Cosmopolitan. We decided to team up.

A month later, and thanks to Justine’s sublime engineering talents, we’ve launched llamafile!

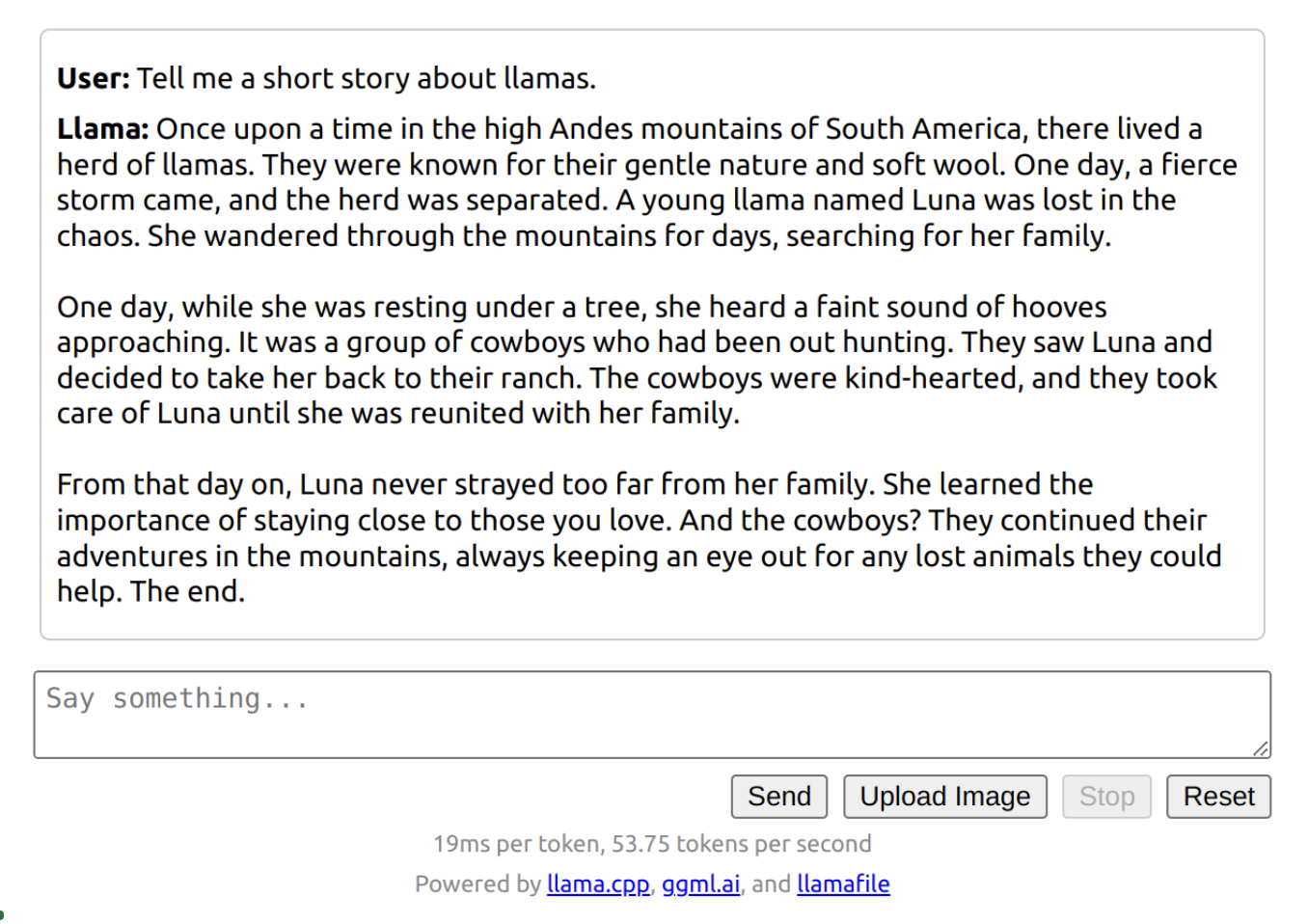

llamafile turns LLMs into a single executable file. Whether you’re a developer or an end user, you simply choose the LLM you want to run, download its llamafile, and execute it. llamafile runs on six operating systems (Windows, macOS, Linux, OpenBSD, FreeBSD, and NetBSD), and generally requires no installation or configuration. It uses your fancy GPU, if you have one. Otherwise, it uses your CPU. It makes open LLMs usable on everyday consumer hardware, without any specialized knowledge or skill.
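For readers who like to script things, here is a minimal Python sketch of what the "download and execute" step can involve on macOS, Linux, or the BSDs, where a freshly downloaded file usually arrives without execute permission. The file name `model.llamafile` is a placeholder rather than a real download, and the sketch deliberately stops short of launching anything.

```python
import os
import stat
from pathlib import Path


def make_executable(path: Path) -> None:
    """Add execute permission for user, group, and others, keeping existing bits."""
    current = path.stat().st_mode
    path.chmod(current | stat.S_IXUSR | stat.S_IXGRP | stat.S_IXOTH)


def is_executable(path: Path) -> bool:
    """Report whether the current user may execute the file."""
    return path.is_file() and os.access(path, os.X_OK)


if __name__ == "__main__":
    llamafile = Path("model.llamafile")  # placeholder name (assumption)
    if llamafile.exists():
        make_executable(llamafile)
        print(f"{llamafile} executable: {is_executable(llamafile)}")
    else:
        print(f"{llamafile} not found; download a llamafile first")
```

Once the permission is set, the file can be started from a terminal like any other program.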

We believe that llamafile is a big step forward for access to open source AI. But there’s something even deeper going on here: llamafile is also driving what we at Mozilla call “local AI.”

Local AI is AI that runs on your own computer or device. Not in the cloud, or on someone else’s computer. Yours. This means it’s always available to you. You don’t need internet access to use a local AI. You can turn off your WiFi, and it will still work.
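To make "local" concrete for developers, the sketch below builds a chat request that can only ever be addressed to your own machine. It rests on assumptions that are not stated in this post: that a running llamafile serves HTTP on `localhost` port 8080 and accepts OpenAI-style chat requests at `/v1/chat/completions`, and that the reply follows the same style. The helper names are ours. Treat it as an illustration and check the project's documentation for the actual interface.

```python
import json
import urllib.request
from urllib.parse import urlparse

# Assumptions, not taken from this post: the running llamafile serves HTTP on
# localhost port 8080 and accepts OpenAI-style chat requests at this path.
BASE_URL = "http://localhost:8080"
CHAT_PATH = "/v1/chat/completions"


def is_local(url: str) -> bool:
    """True when the URL points at this machine's loopback interface."""
    return urlparse(url).hostname in {"localhost", "127.0.0.1", "::1"}


def build_request(prompt: str, base_url: str = BASE_URL) -> urllib.request.Request:
    """Build (but do not send) a chat request addressed to the local server."""
    if not is_local(base_url):
        raise ValueError("refusing to send the prompt to a non-local host")
    body = json.dumps(
        {"messages": [{"role": "user", "content": prompt}]}
    ).encode("utf-8")
    return urllib.request.Request(
        base_url + CHAT_PATH,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str) -> str:
    """Send the prompt and return the reply text (response shape is assumed)."""
    with urllib.request.urlopen(build_request(prompt), timeout=120) as resp:
        payload = json.load(resp)
    return payload["choices"][0]["message"]["content"]
```

Because `build_request` rejects any host other than the loopback addresses, the prompt never leaves your computer, which is the whole point of local AI.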

It also means the AI is fully under your control, and that’s something no one can ever take away from you. No one else is listening in on your questions, or reading the AI’s answers. No one else can access your data. No one can change the AI’s behavior without your knowledge. The way the AI works now is the way it will always work. To paraphrase Simon Willison’s recent observation, you could copy a llamafile to a USB drive, hide it in a safe, and then dig it out years from now after the zombie apocalypse. And it will still work.

We think that local AI could well play a critical role in the future of computing. It will be a key means by which open source serves as a meaningful counter to centralized, corporate control of AI. By running offline and on consumer-grade hardware, it will help bring AI technology to everyone, not just those with high-end devices and high-speed Internet access. We foresee a (near) future where very small, efficient models that run on lower-spec devices provide powerful capabilities to people everywhere, regardless of their connectivity. We built llamafile to support the open source AI movement, but also to enable local AI. We believe in both of these opportunities, and you’ll see Mozilla do more in the future to support them.

Whether you’re a developer or just LLM-curious, we hope you’ll give llamafile a chance and let us know what you think!